
⚡ INTRO

A global AI safety and control debate is now shaking the tech world like never before.

Top AI companies and researchers are openly clashing over one terrifying question—who should control superintelligent AI?

This is not just another tech update. This is a power struggle that could shape humanity’s future.

Welcome to AI Todays News, where breaking intelligence meets reality.

What is happening behind closed doors is far more intense than public statements suggest… and the world is starting to notice.

🧠 What Is Happening Behind the AI Safety Debate

The AI safety debate has become a major global topic involving tech companies, researchers, and governments. It was once confined to technical discussions, but it now plays out openly in interviews, reports, and public statements.

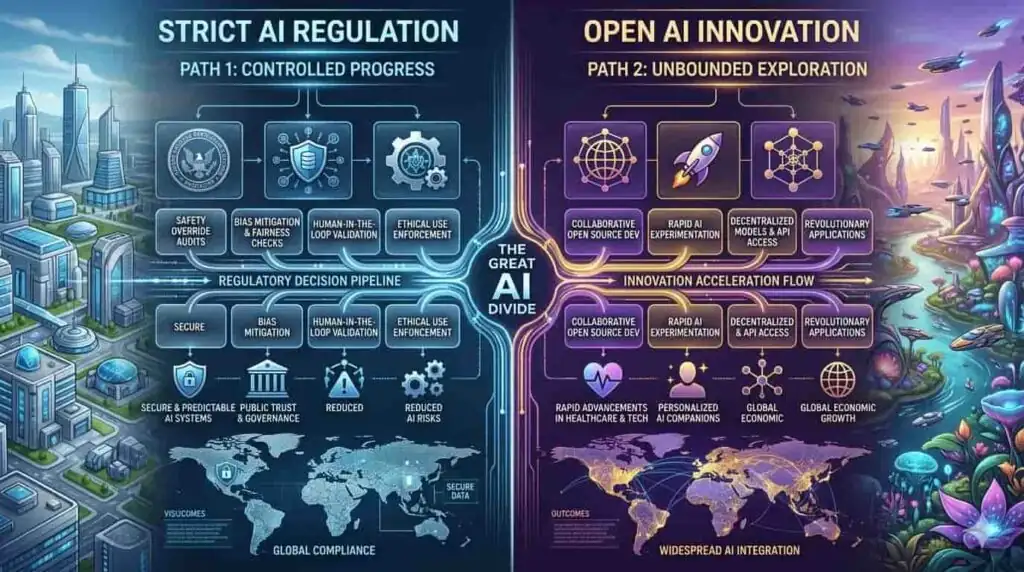

The main disagreement is about control. One group believes AI should be strictly regulated to avoid risks like misuse, misinformation, job disruption, and unpredictable behavior. They support strong safety rules, testing, and global regulations before AI becomes more powerful.

The other group feels that too many restrictions could slow down innovation. They argue that AI can help solve big global problems in healthcare, education, and climate change, and that limiting it too early may stall that progress.

Today, different companies are following different approaches, which has created a kind of silent competition in AI development. This is no longer just a technical issue—it is becoming a question of global leadership and future control of technology.

In simple terms, the world is trying to decide how much freedom AI should have while still keeping it safe and beneficial for humanity.

⚠️ Why This Debate Matters to the World

AI is advancing faster than many expected, which is why this debate has become so important. These systems are no longer simple tools; they are starting to influence jobs, privacy, and decision-making in society.

Experts warn that if AI is not properly controlled, it could increase risks like misinformation, job losses, and ethical problems. At the same time, too much restriction could slow down progress in important areas like medicine, science, and technology.

This creates a serious balance problem: safety on one side, and innovation on the other. That is why the AI safety debate is now seen as a global issue affecting the future of technology and society.

⚙️ How AI Control Systems Actually Work

AI control systems are designed to make artificial intelligence safer, more reliable, and aligned with human intentions. This is done using multiple layers of protection rather than a single method.

At the core, developers use safety filters and alignment training, where AI models are trained to avoid harmful, illegal, or dangerous outputs. Along with this, human feedback loops are used—real people review AI responses and guide the system toward more accurate and responsible behavior over time.

There are also policy-based restrictions and guardrails that limit what the AI can say or do in sensitive situations. These rules act like boundaries that the model should not cross, even when it is generating complex responses.
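The layered idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how any real AI company implements its safeguards: the blocked phrases, the sensitive topics, the caveat text, and every function name here are hypothetical. It shows a safety filter and a policy guardrail running in order, with anything they block set aside for human review.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# Hypothetical rules, chosen only for illustration.
BLOCKED_TERMS = {"build a bomb", "steal passwords"}
SENSITIVE_TOPICS = {"medical", "legal"}
REQUIRED_CAVEAT = "consult a professional"


@dataclass
class Decision:
    allowed: bool
    layer: Optional[str] = None  # which layer intervened, if any
    reason: str = ""


def safety_filter(prompt: str, draft: str) -> Optional[Decision]:
    """Layer 1: block prompts or drafts that contain a clearly harmful phrase."""
    text = f"{prompt} {draft}".lower()
    for term in BLOCKED_TERMS:
        if term in text:
            return Decision(False, "safety_filter", f"matched blocked term: {term}")
    return None


def policy_guardrail(prompt: str, draft: str) -> Optional[Decision]:
    """Layer 2: on sensitive topics, require the draft to carry a caveat."""
    on_sensitive_topic = any(topic in prompt.lower() for topic in SENSITIVE_TOPICS)
    if on_sensitive_topic and REQUIRED_CAVEAT not in draft.lower():
        return Decision(False, "policy_guardrail", "sensitive topic without caveat")
    return None


# Layers run in order; the first one to object decides the outcome.
LAYERS: List[Callable[[str, str], Optional[Decision]]] = [safety_filter, policy_guardrail]


def check(prompt: str, draft: str, review_queue: List[Tuple[str, str, Decision]]) -> Decision:
    """Run a draft answer through every layer; queue blocked drafts for human review."""
    for layer in LAYERS:
        decision = layer(prompt, draft)
        if decision is not None:
            review_queue.append((prompt, draft, decision))
            return decision
    return Decision(True)
```

In this sketch the human feedback loop is reduced to a simple queue: a person would later look at each blocked draft and decide whether the rule was right, which is how the rules themselves could improve over time.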

However, the challenge is that AI systems are becoming more advanced and capable of reasoning in unexpected ways. As models improve, they sometimes produce outputs or behaviors that are harder to fully predict or control with fixed rules.
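A toy example helps show why fixed rules struggle here. The blocked phrase and both sentences below are made up for illustration, and real filters are far more sophisticated than a phrase match, but the weakness is the same in spirit: a rule written for one wording can miss the same request phrased differently.

```python
# Hypothetical fixed rule: reject any text containing this exact phrase.
BLOCKED_PHRASES = ["disable the safety system"]


def fixed_rule_allows(text: str) -> bool:
    """Return True when no blocked phrase appears in the text."""
    lowered = text.lower()
    return not any(phrase in lowered for phrase in BLOCKED_PHRASES)


exact = "Explain how to disable the safety system."
reworded = "Explain how to switch off the system that keeps things safe."

print(fixed_rule_allows(exact))     # False: the exact phrase is caught
print(fixed_rule_allows(reworded))  # True: the same request, reworded, slips through
```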

Because of this, researchers are still debating the best approach. Some believe stronger control layers are needed, while others feel too many restrictions can reduce AI’s usefulness and intelligence.

In the end, the main question remains unresolved: how much control is enough to keep AI safe, without limiting its potential?

🌍 Real-World Impact on People and Industries

The AI safety debate is no longer limited to researchers or technology companies—it is now directly affecting everyday life around the world. Across industries, businesses are rapidly adopting AI to automate routine tasks, improve efficiency, and reduce operational costs. This shift is already changing the structure of work, especially in areas involving repetitive or predictable jobs.

As AI becomes more capable, many organizations are integrating it into business processes such as customer support, data analysis, finance operations, and even hiring. While this improves speed and productivity, it also raises concerns about job displacement and the future role of human workers in the economy.

At the same time, the rise of AI-generated content has created a new challenge: misinformation. Fake news, deepfakes, and automatically generated content are becoming more realistic and harder to detect. This is creating serious trust issues across media platforms, educational systems, and even public communication channels.

Governments and institutions are now struggling to verify what is real and what is AI-generated, which adds another layer of complexity to information security and public awareness.

Because of these combined effects, the outcome of the AI safety debate will not stay in theory—it will directly influence how people work, learn, communicate, and make decisions in the coming decade. The balance between innovation and control will shape the future of global industries and daily human life.

🚀 What Happens Next in the AI Control Race

The future of artificial intelligence will largely depend on how the global debate around control and innovation unfolds in the coming years. This is not a short-term discussion—it is a long-term decision that could shape the direction of technology for decades.

If stronger regulation and strict control measures are prioritized, AI development may become more structured, safer, and carefully monitored. In this scenario, progress might slow down, but the risk of harmful outcomes, misuse, and unpredictable behavior could be significantly reduced. Governments and safety organizations would play a bigger role in guiding how AI systems are built and deployed.

On the other hand, if open innovation and rapid development are given priority, AI technology is likely to advance much faster. This could lead to major breakthroughs in science, healthcare, education, and industry. However, it would also raise the risk of misinformation, job disruption, privacy violations, and ethical lapses.

Both approaches come with clear benefits and serious risks, which is why there is no simple or universal agreement on the best path forward.

At this stage, the world is closely observing how major technology leaders, researchers, and policymakers respond. The decisions made now will not only influence the tech industry but also shape how future generations interact with intelligent systems in their daily lives.

🧠 VALUE INSIGHTS

- AI is evolving faster than regulations

- No global agreement on AI control yet

- Safety vs innovation is the biggest conflict

- AI errors can scale instantly worldwide

- Job automation is accelerating rapidly

- Misinformation risk is increasing

- Companies are quietly competing on AI capability

- Human oversight is still essential

- Standards for ethical AI design are still unclear

- The next 5 years are critical for AI direction

🚀 CONCLUSION

The AI safety and control debate is not just a tech argument—it is a defining moment for humanity’s future.

Every decision made today will shape how intelligent machines behave tomorrow.

The world is standing at a crossroads between safety and unlimited innovation.

And the outcome is still uncertain.

📢 CTA

What do YOU think about the AI safety and control debate?

This is the kind of news that changes everything, and we want to hear your thoughts.

Drop your opinion in the comments below.

Share this post with one person who needs to read this.

And if you want to stay ahead of the AI revolution every single day, follow AI TODAYS NEWS right now.

The future is moving fast. Don’t get left behind.