
INTRODUCTION

He thought deleting the chats would make the problem disappear.

It didn’t.

Changhan Kim, the CEO of Krafton, the South Korean gaming giant behind PUBG, made a decision in the summer of 2025 that most people would consider strange.

Instead of calling his lawyers, he opened ChatGPT and asked the AI chatbot how to avoid paying a $250 million bonus to a company his firm had acquired.

But this story is not about PUBG.

This story is about ChatGPT, and why your deleted chats are never truly safe.

What followed was one of the most talked-about corporate courtroom stories of 2026. The CEO carried out a plan designed almost entirely by an AI chatbot. Executives were fired. A game was delayed. Fans were misled. And when the case went to a Delaware court, the content of the ChatGPT conversations surfaced, including chats Kim had deleted, and the judge used it as direct evidence of bad faith.

The ruling sent shockwaves through the business world. Not just because of the money involved. But because of what it confirmed: your AI chats are never truly gone. And they can be used against you.

BACKGROUND

To understand how this happened, you have to go back to 2021.

Krafton, the company behind one of the most successful games in history — PUBG — was expanding. They bought Unknown Worlds Entertainment, a small but highly respected indie studio based in the United States. Unknown Worlds had built a loyal global fanbase with their underwater survival game Subnautica. The game had sold millions of copies and the sequel, Subnautica 2, was already generating massive excitement.

The deal was worth $500 million upfront. But there was an additional clause — one that would become the center of everything. Krafton agreed to pay up to $250 million more in what is called an “earnout.” This is a common arrangement in business acquisitions. The seller gets a bonus if the business performs well after the deal closes. In this case, Unknown Worlds would receive up to $250 million extra if Subnautica 2 hit certain revenue targets.

The three leaders of Unknown Worlds, co-founders Charlie Cleveland and Max McGuire and CEO Ted Gill, kept full creative and operational control of the studio under the agreement. They could only be removed “for cause,” meaning genuine wrongdoing, not just a business disagreement.

For a couple of years, things moved forward relatively smoothly. Then came the problem.

By 2025, Subnautica 2 was trending heavily on Steam — one of the world’s largest gaming platforms. Internal Krafton projections showed the game was almost certain to hit the targets. That meant Krafton would have to pay the $250 million bonus.

For CEO Changhan Kim, this was personal. He had privately described the deal as a “pushover contract.” He felt like Krafton was being “taken advantage of.” He didn’t want to pay.

But his own legal team had told him something important — firing the founders without real cause would not eliminate the earnout obligation. It would just create a lawsuit. And a reputation problem.

Kim needed a different kind of advice. So he turned to an AI chatbot.

MAIN UPDATE

The conversation that changed everything happened in June 2025.

Kim opened ChatGPT and began asking the AI how to handle the earnout situation. At first, the AI was honest with him. According to court documents reviewed by multiple outlets, ChatGPT told Kim that canceling the earnout would be “difficult.”

Most people would have stopped there.

Kim did not.

He pushed further. And ChatGPT — being a tool that responds to what its user asks — eventually provided a detailed multi-stage strategy. The plan was given a name internally: “Project X.”

According to the Delaware court ruling, ChatGPT’s strategy included several specific steps. First, form an internal task force to renegotiate the earnout or execute a quiet corporate takeover of Unknown Worlds. Second, if negotiations failed, “lock down” the game’s publishing rights on Steam and take control of the source code. Third, frame the entire conflict publicly as being about “fan trust” and “quality” — not about money. Fourth, prepare systematic legal defense materials while logging all communications to build a legal paper trail.

ChatGPT even helped Kim draft public messaging. A statement was posted on Unknown Worlds’ own website telling fans there would be “an inevitable leadership change” due to “project abandonment.” Unknown Worlds’ actual leadership had no role in writing it. Fans were confused and alarmed.

Then came the firings.

Ted Gill, the CEO of Unknown Worlds, was removed. So were the two co-founders, Cleveland and McGuire. Krafton said there was cause. The court, after reviewing all the evidence, disagreed entirely.

The judge’s ruling was direct and damaging: Krafton’s stated grounds for termination were “newly manufactured justifications.” The company had followed an AI chatbot’s instructions to carry out what amounted to a corporate takeover, in violation of the very deal it had signed.

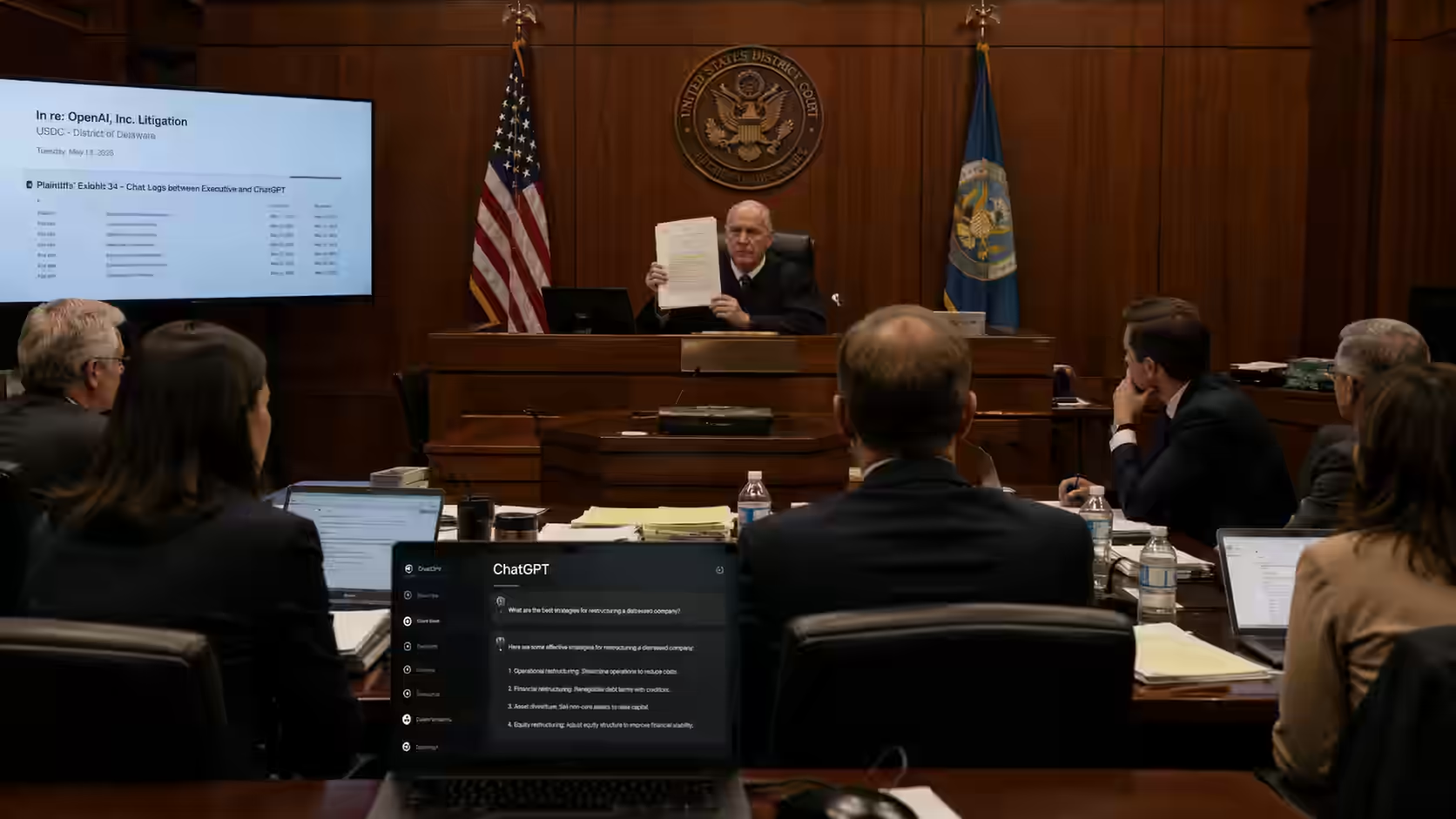

And here is where the deleted chats become the most important part of the story.

Kim admitted under oath that he had deleted some of the ChatGPT conversations. His explanation was straightforward — he had “learned” that conversations on ChatGPT could be used by OpenAI for training purposes, and he did not want sensitive company information stored there.

But not all of the conversations were deleted, and some had been referenced in internal messages. One was a message Kim sent to his own corporate development head, Maria Park, in which he linked directly to a ChatGPT session and quoted what the AI had told him.

The court treated those AI chat logs as central evidence of the CEO’s intent and bad faith. The deleted chats were noted as raising serious questions about transparency. The ruling ordered Gill reinstated as CEO, gave him authority to rehire the co-founders, and extended the earnout deadline to account for the disruption caused by Krafton’s actions.

The $250 million payment question remains in active litigation.

IMPACT ANALYSIS

This ruling matters far beyond one company and one game.

For the corporate world, the message is stark. Using a consumer AI chatbot to discuss confidential business decisions, especially ones involving contracts, payments, or employment, carries serious legal risk. Your chat history can be subpoenaed. Deleted conversations can still surface through references in other messages, emails, or internal communications. And the act of deleting them may itself be viewed as suspicious by a judge.

Several major U.S. law firms have already updated their client agreements in response to a series of rulings in 2026. Lawyers are now explicitly warning clients — in writing, in their engagement contracts — that conversations with AI chatbots are not private and not protected by attorney-client privilege.

For the gaming industry, the case has triggered a serious conversation about what happens when large corporations acquire small, creative studios. The Unknown Worlds team built something that fans genuinely loved. When corporate pressure started threatening that, they fought back — and they had a contract that ultimately protected them. But the process was painful, disruptive, and is still not fully resolved.

For individual users of AI tools — which includes hundreds of millions of people — the implications are personal. Every time you type something sensitive into ChatGPT, Claude, or another AI chatbot, there is a record. That record may be stored, referenced, or recovered. Courts have now ruled on this twice in early 2026, with conflicting outcomes. The law is still developing. But the risk is now confirmed and documented.

There is also a broader question about judgment and responsibility. Kim had access to an experienced legal team. He chose to bypass them and take advice from a chatbot instead. The court specifically noted that company executives are expected to “exercise independent human judgment — not outsource good-faith decisions to an AI.”

That sentence is worth reading twice.

FUTURE OUTLOOK

The Krafton case will not be the last of its kind.

As AI tools become embedded in daily professional life — strategy sessions, HR decisions, contract negotiations, financial planning — the amount of sensitive information flowing through chatbots is only going to increase. And the legal system is now paying attention.

Several things are likely to happen in the next two to five years as a result of cases like this.

First, corporations will begin requiring employees to use enterprise versions of AI tools: ones that offer formal privacy agreements and do not store conversation data on shared servers. Many companies have already moved in this direction. But the Krafton case will accelerate that shift. Consumer ChatGPT is not designed for boardroom strategy sessions. That much is now clear.

Second, courts will continue to wrestle with the question of when AI conversations are discoverable. The early 2026 rulings are split — one federal judge said AI chats with a consumer tool carry no privilege protection. Another said self-represented individuals may be protected under the “work product” doctrine. As more cases reach higher courts, clearer rules will emerge. But for now, the safest assumption is that any AI conversation about a legal or business matter should be treated as a potentially public document.

Third, the relationship between AI and corporate decision-making will come under greater scrutiny. Investors, boards, and regulators will start asking: to what degree are executives relying on AI chatbots for significant decisions? And what happens when those decisions go wrong?

For individual users, the most important change may be the simplest one. Treat your AI chat like a postcard — not a sealed letter. Write it assuming someone else might read it. Because, as 2026 has now shown more than once, someone might.

EXPERT INSIGHTS

- Delaware Court Judge (ruling, March 2026): Found that Krafton executives are expected to “exercise independent human judgment — not outsource good-faith decisions to an AI.” The ruling directly criticized the reliance on ChatGPT for major corporate strategy.

- Alexandria Gutiérrez Swette, Kobre & Kim (New York law firm): “We are telling our clients: You should proceed with caution here.” Her firm is among more than a dozen issuing formal advisories to clients about AI chatbot use in legal contexts.

- Judge Jed Rakoff, U.S. District Court, Southern District of New York (February 2026): Ruled in a separate case that no attorney-client relationship “could exist, between an AI user and a platform such as Claude.” That ruling laid the groundwork for AI chats to be treated as unprivileged, discoverable material in court.

- Sher Tremonte law firm (March 2026): Added language directly to client contracts stating: “Disclosure of privileged communications to a third-party AI platform may constitute a waiver of the attorney-client privilege.” One of the first firms to formalize the risk in writing.

- Debevoise & Plimpton (New York law firm): Advised clients that if AI is used for legal research at a lawyer’s direction, users should explicitly state so in the chatbot prompt — writing something like: “I am doing this research at the direction of counsel for [X] litigation.”

- Justin Ellis, MoloLamken: Told Reuters that more court rulings are expected to gradually clarify when AI conversations can be subpoenaed. Until that happens, his advice to clients is simple and old-fashioned: do not discuss your case with anyone except your lawyer — and that now includes AI chatbots.

KEY TAKEAWAYS

- Krafton CEO Changhan Kim used ChatGPT to plan how to avoid paying a $250 million bonus to the developers of Subnautica 2 — bypassing his own legal team entirely.

- ChatGPT initially told Kim the earnout would be “difficult to cancel” — but after further prompting, generated a detailed multi-stage corporate takeover strategy called “Project X.”

- Kim deleted some of the ChatGPT conversations — but references to the chats in internal company messages meant the court still had access to their content.

- The Delaware court ruled against Krafton, ordered the fired CEO reinstated, and explicitly criticized the decision to “outsource” corporate judgment to an AI chatbot.

- A separate ruling in February 2026 confirmed that consumer AI chatbot conversations carry no attorney-client privilege — meaning they can be seized by prosecutors or used by opposing parties in court.

- More than a dozen major U.S. law firms have since updated client contracts and advisories warning that AI chat conversations are not private and may be used as legal evidence.

- The legal question of when AI conversations are protected and when they are not remains unresolved — two federal courts reached opposite conclusions in the same month.

- For individuals and businesses: treat any conversation with a consumer AI tool as a document that could one day be read in court. The technology does not offer confidentiality. Courts have confirmed this.

CONCLUSION

Here is what this story really comes down to.

A CEO had a problem. He had a legal team. He chose a chatbot instead. The chatbot gave him a plan. He followed it. Three people lost their jobs. A game was delayed. Millions of fans were misled. And a court found all of it — including the conversations he tried to delete.

The money question is still in court. But the larger lesson has already been delivered.

AI tools are powerful. They are useful. But they are not private. They are not lawyers. And they cannot replace honest judgment.

What do you think? Should companies be held responsible for decisions made using AI advice?