
INTRODUCTION

Most people assume their AI conversations are private. You type something personal. You ask something you would never say out loud to another person. And you assume it stays between you and the machine.

That assumption has just been seriously challenged.

A new class-action lawsuit filed in the United States claims that user activity on ChatGPT — one of the most widely used AI platforms in the world — may have been shared through tracking systems connected to Meta and Google. The allegations have not been proven in court. OpenAI has not publicly confirmed these claims. But the lawsuit alone has been enough to spark one of the largest data privacy conversations the AI industry has ever seen.

And honestly, for good reason.

People use ChatGPT to discuss their health problems, their relationship struggles, their business plans, their fears. If any of that information traveled further than it was supposed to, that is not a minor technical detail. That is a fundamental breach of trust between humans and the AI tools they have started to rely on every single day.

This story is still developing. But here is everything that is known right now.

STORY BACKGROUND

To understand why this lawsuit matters so much, you need to understand how the internet’s data economy actually works.

When you visit almost any website or use almost any app, invisible systems called trackers and pixels collect information about what you did, when you did it, and sometimes even what you typed. This technology has been a normal part of the internet for over two decades. Companies like Meta and Google built enormous advertising businesses largely on the back of this kind of data collection.

For years, privacy researchers have raised concerns about how far this tracking extends. Does it follow people into their email? Into their health apps? Into their private messages?

When ChatGPT launched in late 2022, it changed the way millions of people interact with technology almost overnight. By 2024, OpenAI was reporting hundreds of millions of active users. People started using it not just for work tasks and research, but for deeply personal things. Someone would describe their medical symptoms and ask what they might mean. Someone else would talk through a difficult family situation. Students would confide their anxieties. Professionals would share confidential business strategies.

The implicit understanding was that these conversations were between the user and the AI. Not stored in ways that could be linked to advertising platforms. Not accessible to third parties with commercial interests.

That understanding is exactly what the new lawsuit is now challenging.

The legal action specifically alleges that certain tracking tools embedded within the ChatGPT web infrastructure may have sent behavioral data — information about how users interact with the platform — to Meta’s Pixel system and Google’s analytics services. These are the same tools used across millions of websites to track user behavior for advertising purposes.

The lawsuit does not claim that the actual text of conversations was shared verbatim. The allegations center more on behavioral and session data. But in the world of modern data analysis, even behavioral patterns can reveal a great deal about what a person was doing or discussing online.

This distinction matters legally. But for ordinary users, it may feel like a fine line that does not offer much comfort.

MAIN UPDATE

The lawsuit was filed as a class action, which means it is being brought on behalf of a large group of affected users rather than a single individual. Class-action suits of this kind are common in United States courts when many people are believed to have suffered the same harm from a single company’s actions.

The core allegation is specific. The plaintiffs claim that when users interacted with ChatGPT through its web interface, tracking code present on the platform may have sent data signals to Meta’s Pixel — a widely used advertising tool that Meta provides to websites — and to Google’s analytics systems. These signals could have included information such as pages visited, session durations, and interaction patterns.

For context, Meta Pixel is a piece of code that website owners voluntarily embed on their sites to track visitor behavior and optimize advertising campaigns. It is used by millions of websites globally. When it is present on a page where sensitive activities happen, it can potentially collect information that users would not expect an advertising platform to receive.
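
To make the mechanism concrete, here is a minimal Python sketch of how a generic tracking pixel typically reports a page view: the page builds a URL pointing at a third party's server, packs details of the visit into the query string, and requests a tiny image from that URL. The endpoint `tracker.example`, the parameter names, and the helper `build_pixel_url` are all hypothetical. This is not Meta's or Google's actual API, and it does not describe what ChatGPT's pages sent.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

# Hypothetical third-party collection endpoint (illustration only).
PIXEL_ENDPOINT = "https://tracker.example/collect"


def build_pixel_url(site_id: str, event: str, page_url: str, seconds_on_page: int) -> str:
    """Return the URL a page would request to report one event.

    All parameter names here are made up for illustration.
    """
    params = {
        "id": site_id,              # which website embedded the tracker
        "ev": event,                # event name, e.g. "PageView"
        "dl": page_url,             # full address of the page being viewed
        "t": str(seconds_on_page),  # time spent on the page so far
    }
    return f"{PIXEL_ENDPOINT}?{urlencode(params)}"


if __name__ == "__main__":
    url = build_pixel_url(
        "site-123", "PageView", "https://chat.example/c/abc?topic=health", 42
    )
    print(url)
    # What the receiving server can read back from the request:
    print(parse_qs(urlsplit(url).query))
```

The point of the sketch is that the page address and timing travel with every event. No conversation text is involved, yet anything encoded in the address still reaches the third party.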

The lawsuit does not allege that OpenAI deliberately sold user conversations. The claim is more about whether the standard tracking infrastructure present on the platform was configured appropriately for a service where users regularly share highly sensitive personal information.
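
What "configured appropriately" would mean in practice is one of the open questions in the case. One common approach, sketched below under stated assumptions, is to load third-party tags only on pages that never carry sensitive content, and only after the user has consented. The route prefixes and the function `may_load_third_party_tags` are hypothetical and are not taken from the lawsuit or from any OpenAI code.

```python
# Hypothetical route prefixes where third-party tags might be acceptable.
MARKETING_PREFIXES = ("/pricing", "/blog", "/about")


def may_load_third_party_tags(path: str, user_consented: bool) -> bool:
    """Allow tags only with consent and only on non-sensitive marketing pages."""
    if not user_consented:
        return False
    # Match the prefix exactly or as a parent directory, so "/blogging"
    # is not mistaken for "/blog".
    return any(path == p or path.startswith(p + "/") for p in MARKETING_PREFIXES)


if __name__ == "__main__":
    for path in ("/blog/launch-post", "/c/abc123", "/pricing"):
        print(path, may_load_third_party_tags(path, user_consented=True))
```

An allowlist like this fails closed: any new page, including a conversation page, gets no third-party tags unless someone deliberately adds it to the list.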

OpenAI has not issued a detailed public response to the specific claims in the lawsuit at the time of writing. The company has stated in its general privacy documentation that it takes user privacy seriously and that it works to protect user data. Legal representatives have indicated the company intends to defend against the claims.

It is important to keep allegation and proof separate. Nothing has been proven. A lawsuit being filed is not the same as a court finding that wrongdoing occurred. The legal process will take time, and the outcome is genuinely uncertain.

What is not uncertain is the reaction.

Within hours of reports about the lawsuit spreading, discussions erupted across Reddit, X, and technology forums. Many users said they had not realized tracking systems of this kind might be active on the ChatGPT platform. Others noted that they had shared medical details, financial information, or relationship struggles through the service with an expectation of privacy that now felt less secure.

Privacy researchers who have been raising concerns about AI data practices for years pointed to the lawsuit as evidence that the industry needs clearer standards and stronger independent oversight — not just company-written privacy policies.

IMPACT ANALYSIS

If the allegations in this lawsuit are even partially upheld in court, the implications extend well beyond OpenAI.

The entire AI industry has been expanding rapidly with relatively limited privacy regulation specifically designed for AI-powered platforms. Most AI companies currently operate under general data protection laws like GDPR in Europe and various state-level privacy laws in the United States. But none of these frameworks were written specifically with AI conversational systems in mind.

A successful lawsuit of this kind could push legislators to move faster on AI-specific privacy legislation. Several proposed bills have been sitting in various stages of review in the United States Congress and European Parliament. A high-profile case involving one of the most recognizable AI brands in the world would give those efforts significant political momentum.

For everyday users, the immediate impact is a shift in awareness. Many people who had been using ChatGPT for sensitive conversations may now reconsider what they share. This kind of behavioral change — even if it turns out the risk was smaller than feared — represents a real cost to the genuine utility these tools can provide. People make better use of AI assistants when they feel safe enough to be honest and specific in their queries.

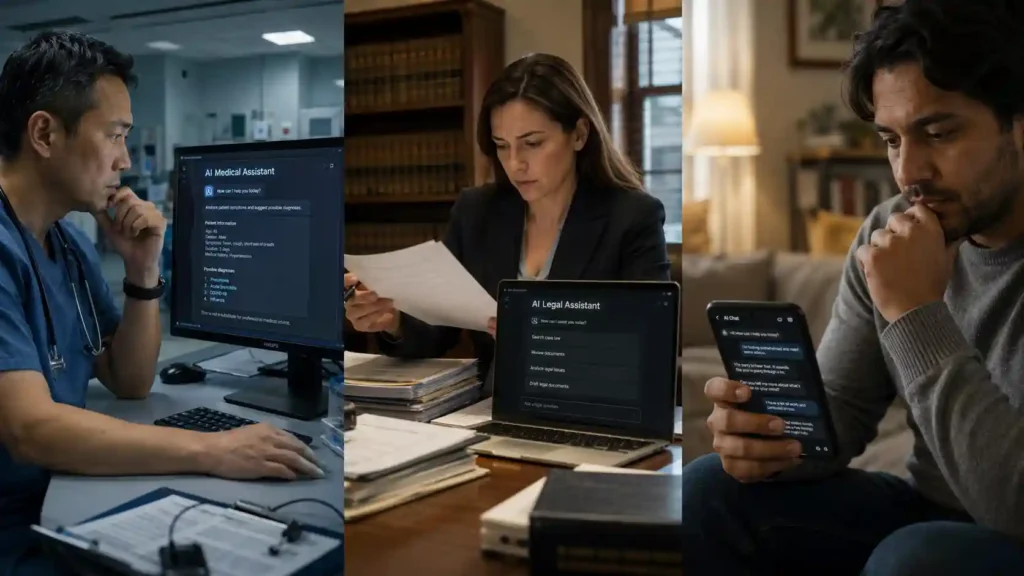

For the healthcare and legal industries, this raises particularly serious questions. Both sectors have been actively exploring how AI tools can assist professionals in their work. Lawyers drafting case strategies, doctors discussing patient presentations, therapists researching treatment options — all of these use cases depend on an assumption of confidentiality that this lawsuit has placed under public scrutiny.

For competitors and the broader tech industry, the lawsuit creates both risk and opportunity. Companies that can credibly demonstrate stronger privacy protections may see this as a moment to differentiate themselves. But the industry as a whole will face increased skepticism from users who are now asking questions they were not asking six months ago.

FUTURE OUTLOOK

The outcome of this specific lawsuit will take months or possibly years to determine. Legal processes move slowly. But the conversation it has started is already accelerating on its own timeline.

Privacy advocates have been arguing for years that the AI industry needs to be held to a different standard than traditional software. The reason is straightforward. When you interact with a search engine, you type a query. When you interact with a conversational AI, you often explain your situation, describe your problem, reveal your fears, and ask for guidance that you might otherwise only seek from a doctor, a lawyer, or a trusted friend.

The intimacy of that interaction makes the privacy stakes higher — not lower — than in most other digital contexts.

In the next one to two years, regardless of how this specific lawsuit resolves, the pressure on AI companies to implement clearer, independently verifiable privacy practices will almost certainly increase. The European Union’s AI Act, which has already begun taking effect, includes provisions that may become relevant to cases like this. Several U.S. states have been moving toward stronger digital privacy laws.

For AI companies, the strategic calculation is changing. Being trustworthy used to be a background condition — something you claimed in a privacy policy and rarely had to demonstrate publicly. Going forward, trust is likely to become a competitive asset that companies will need to actively build and maintain through transparent practices and third-party verification.

For users, the most honest advice is also the most practical. Until clearer standards exist, being thoughtful about what you share with any AI platform is simply good digital hygiene. This does not mean avoiding these tools — they are genuinely useful. It means understanding that the same common sense you apply to email and social media should also apply to AI conversations.

The technology is not going away. The privacy conversation around it is only getting louder.

EXPERT INSIGHTS

- Privacy researchers who have studied tracking pixels note that Meta Pixel and similar tools are embedded across a vast range of websites, often without users being clearly informed about what data those tools collect.

- Legal analysts observing the case suggest that the class-action structure is significant — it signals that the plaintiffs believe a large, identifiable group of users experienced the same potential harm.

- Digital rights organizations have pointed out that current privacy laws in the United States were not designed with AI conversational platforms in mind, leaving a meaningful regulatory gap.

- Healthcare privacy specialists have raised concerns that if behavioral data from AI health-related conversations were captured by advertising infrastructure, it could conflict with the spirit of medical confidentiality even if not directly violating existing law.

- Technology policy experts predict that this lawsuit, regardless of outcome, will be cited in upcoming legislative debates around AI governance in both the U.S. and Europe.

KEY TAKEAWAYS

- A class-action lawsuit alleges ChatGPT user activity may have been shared with Meta and Google through tracking systems — these allegations are unproven in court.

- The claims focus on behavioral and session data, not necessarily the text of conversations.

- OpenAI has not confirmed the allegations, and its legal representatives have indicated that it intends to defend against the claims.

- This case highlights a gap in existing privacy law, which was written before AI conversational tools existed at scale.

- Healthcare, legal, and financial professionals who use AI tools for sensitive work face heightened questions about data confidentiality.

- The lawsuit has already triggered widespread public concern about AI privacy regardless of its eventual legal outcome.

- Users are advised to apply the same thoughtful discretion to AI conversations that they apply to email and social media.

- Regulatory pressure on the AI industry around privacy practices is expected to increase significantly in 2026 and beyond.

- Trust in AI platforms is emerging as a competitive differentiator — companies that can demonstrate genuine privacy protections may gain a meaningful advantage.

- This is an ongoing story, and the full legal and regulatory consequences will develop over the months and years ahead.

CONCLUSION

The ChatGPT privacy lawsuit is not a confirmed scandal. Nothing has been proven. The legal process will take time, and the outcome is genuinely uncertain.

But what this moment has already proven is that people care deeply about what happens to the private thoughts they share with AI systems. They should care. These tools have become intimate in a way that most technology never has been. And that intimacy demands a higher standard of accountability.

Whether OpenAI is ultimately found to have done anything wrong or not, this case has opened a conversation that the entire AI industry needed to have — openly, honestly, and with users at the center of it.

The AI revolution is still happening. It just got a little more complicated.
